Are you in the process of developing a sophisticated AI solution for your business and looking to expedite its launch? Consider opting for pre-built, versatile Large Language Models (LLMs)! However, before diving in, it’s crucial to be prepared for the challenges that generic LLMs can present, such as hallucinations, failures in logical reasoning, vulnerabilities in data security and privacy, as well as outdated or generalized knowledge. While off-the-shelf LLMs like ChatGPT may appear cost-effective and time-efficient initially, they can lead to significant costs down the line.

In such scenarios, creating a proprietary Large Language Model (LLM) can offer substantial benefits.

Custom LLM development services provide the opportunity to utilize proprietary datasets, fine-tune outputs for specific use cases, and deliver AI solutions that are precise and in alignment with business objectives.

Whether your goal is to automate customer support, extract insights from complex documents, or enhance internal knowledge management, a well-crafted LLM has the potential to revolutionize operations and decision-making processes.

This comprehensive guide delves into all aspects of developing LLMs, from understanding the various types and their business applications to a step-by-step process. By the end, you’ll have a clear roadmap for implementing enterprise-grade AI that generates tangible value.

KEY TAKEAWAYS

- While off-the-shelf models are powerful, a domain-specific LLM can offer more accurate, context-aware, and compliant AI solutions tailored to your business.

- To embark on building your own LLM, it’s essential to start by defining the use case, target audience, and success metric as part of your strategic groundwork.

- The quality of the data directly impacts the performance of the LLM, so ensuring clean, curated, and well-structured datasets is non-negotiable.

- Fine-tuning is often the quickest route to enhancing the performance of LLMs.

- Ongoing human feedback is crucial for training LLMs to ensure accuracy, safety, and relevance.

If you’re interested in developing a customized LLM trained on your datasets to deliver results tailored to your needs, consider hiring AI developers from MindInventory!

What is LLM?

LLM, which stands for Large Language Models, refers to a form of artificial intelligence that utilizes AI/ML models to comprehend, interpret, and interact in human language. Essentially, an LLM is a neural network, typically based on the Transformer architecture, that learns patterns, context, and relationships within text.

Therefore, an LLM can function as a scalable knowledge worker, automating repetitive tasks, enhancing decision-making speed, and extracting insights from extensive amounts of unstructured text.

Some prominent examples of LLMs include GPT-4 by OpenAI, Gemini by Google DeepMind, Claude 3 by Anthropic, LLaMA 3 by Meta, and Mistral 7B by Mistral AI.

Types of LLMs Businesses Can Build

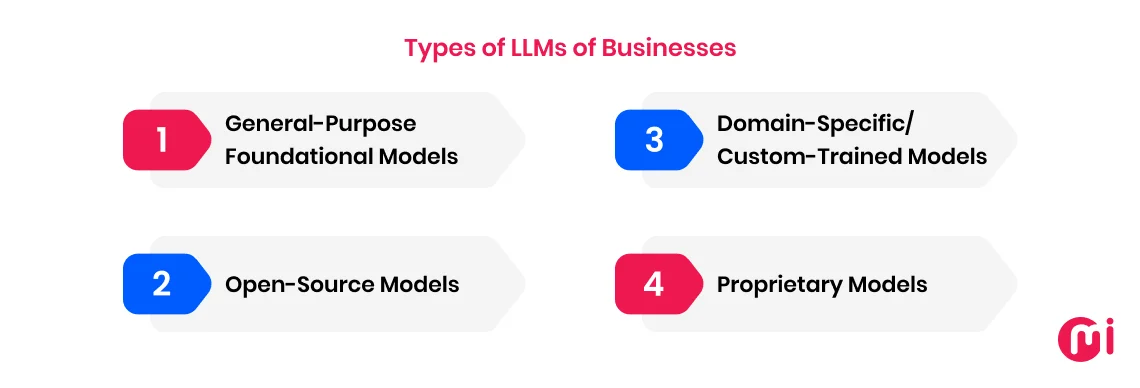

Large language models (LLMs) are classified based on architecture, training data and purpose, and modality types, following academic principles. The primary types of LLMs encompass decoder-only, encoder-only, encoder-decoder, and multimodal models, each tailored for specific natural language processing tasks and applications.

For businesses seeking practical LLM types, considerations should include general-purpose, open-source, domain-specific, or proprietary models.

1. General-Purpose Foundational Models

These are extensive, pre-trained base models designed to handle a wide array of tasks without necessitating a rebuild from scratch. They serve as the backbone of numerous AI/ML applications by offering versatile reasoning, comprehension, and text generation capabilities.

Examples: Gemini, GPT, Claude, and PaLM.

2. Open-Source Models

These models are publicly accessible, permitting ML developers and organizations to inspect, modify, and retrain them for specific requirements, often at a lower cost. They typically represent smaller or community-supported variations of commercial LLMs but encourage innovation and transparency.

Examples: LLaMA 3, Mistral 7B, Falcon, and RedPajama.

3. Domain-Specific/Custom-Trained Models

This category of LLM is trained on specialized datasets for particular industries, such as healthcare, finance, legal, etc. They offer heightened accuracy in niche contexts where industry terminology and compliance are paramount. Consequently, they are beneficial for tasks demanding specialized knowledge, compliance phrasing, or in-depth understanding of a specific field.

Examples: BloombergGPT (finance), Med‑PaLM 2 (healthcare), and LegalBERT (legal).

4. Proprietary Models

As implied by the name, these models are developed and owned by specific companies. Proprietary LLM models are presented as closed-source services, primarily accessible through APIs. They are trained on private, often curated datasets and fine-tuned for high accuracy and compliance with industry standards.

These models deliver state-of-the-art performance, reliable enterprise-grade support, regular updates, and seamless integration into existing business systems, making them ideal for organizations focused on stability and ease of deployment.

Examples: Claude 3.5 Sonnet and Gemini 1.5 Pro.

Why Generic LLMs Fall Short in Enterprise Environments

Generic large language models (LLMs) fall short in enterprise environments primarily due to limitations in handling complex, large-scale, and domain-specific tasks that enterprises require.

Aside from that, key reasons include:

- Limitation in processing large amounts of text at once.

- Lack of familiarity with a company’s unique data, terminology, and processes.

- Deficiency in critical features like prompt governance, access control, and data isolation, essential to meet enterprise-grade security standards.

- “Black box” nature hindering compliance assurance, decision tracking, or output accuracy verification.

- Inability to interact reliably with multiple enterprise systems to complete tasks.

- Deficiency in structured reasoning to execute precise business processes.

- Tendency to produce inaccurate or “hallucinated” content.

- Weakened confidentiality controls and compliance awareness.

- Inconsistent performance lacking stability, predictability, and version control.

Why Should You Build Your Own LLM?

Building your own LLM grants greater control over data security, model customization, and performance tailored to specific needs.

Key reasons to embark on building your own LLM include:

- Assurance of trust, ethical alignment, and bias control, ensuring your model operates within your organization’s ethical, legal, and compliance boundaries.

- Management of data security and privacy, as your proprietary model won’t rely on external servers, reducing the risk of data breaches and ensuring compliance.

- Customization and specificity control enabling training LLMs with your data, resulting in more accurate and relevant outputs for niche use cases.

- Full control and independence, allowing alignment of the LLM with your specific requirements and business goals (covering the model’s architecture, training data, and updates).

- Competitive advantage by creating unique services, products, or customer experiences, setting you apart from competitors.

- Potential cost savings at scale and in the long run, especially for high volumes of inference requests.

- Explainability, a crucial consideration for building your own LLM, aiding in maintaining end-to-end transparency and control, enhancing reliability in high-stakes domains, and ensuring data privacy and compliance.

Should You Train Your Own LLM or Use an Existing One?

You should opt for an existing LLM if rapid deployment is essential, as it’s more cost-effective and requires less technical expertise. Conversely, training your own LLM is advisable when domain-specific knowledge, high data privacy, or custom reasoning lacking in existing models are imperative.

Here’s a quick comparison table to help you decide whether to train your own LLM or utilize an existing one:

| Factor | Train Your Own LLM | Use General-Purpose LLM |

| Customization Need | High; need it tailored to specific data and needs | Limited; can work with general-purpose LLM |

| Data Privacy | Complete control over sensitive data | Potential exposure to third parties |

| Initial Cost | High due to training and infrastructure | Lower upfront cost |

| Time to Deploy | Longer; requires data preparation and training | Fast; ready-to-use with APIs |

| Performance | Optimized for specific tasks and domains | May lack domain-specific accuracy |

| Scalability | Needs infrastructure to scale | Scalable via cloud providers |

| Maintenance | Requires ongoing management and updates | Managed by service providers |

| Vendor Lock-In | Avoided; full ownership | Possible dependency on vendor |

| Flexibility | High; full control and adaptability | Limited customization options |

| Operational Cost | Potentially lower long-term costs | Pay-per-use or subscription fees |

A Step-By-Step Process to Build Your Own LLM

Developing a large language model entails several structured stages, from defining the purpose and curating datasets to training, deployment, and continuous enhancement. Below is an overview of a step-by-step process summarizing the construction of your own LLM:

Step 1: Define Objectives & Strategy

Prior to commencing the development of a large language model, establish what your LLM will accomplish, who will utilize it, and how you’ll measure its success.

Additionally, contemplate the type of solution you intend to create; be it a conversational assistant for internal support teams, an automated summarization engine for swift document review, or a code generator for developers.

Upon determining the purpose, set measurable KPIs early on. Concentrate on accuracy, response time, cost per query, and factual reliability